- A Priority queue is an ADT in which we store data where each data has a priority.

- The priority is usually a number, but it could be anything that enables us to order the keys, such as letters.

- A priority queue has 2 operations: insert(k,e), insert an element e with a value k. removeMin() remove and return the element with the lowest key.

- Sometimes we'll throw in changeKey(k,e), and remove(e), which change the key value for an element, and remove the element.

- We can sort with a priority queue: take the stuff, add all to the priority queue, remove one at a time.

- How long does that take? depends on the implementations

- Sorted Linked List

- insert(e,k): find the location \( \in\) O(n), insert at that spot \(\in\) O(1), total: O(n).

- removeMin(): find the location \(\in\) O(1), remove it \(\in\) O(1), total: O(1).

- Unsorted Linked List

- insert(e,k): find the location (add to front) \(\in\) O(1), insert at that spot \(\in\) O(1), total: O(1).

- removeMin(): find the location \(\in\) O(n), remove it \(\in\) O(1), total: O(n).

- Sorted Array

- insert(e,k): find the location (binary search) \(\in\) O(lg n), insert at that spot and shift \(\in\) O(n), total: O(n).

- removeMin(): find the location \(\in\) O(1), remove it (circular array) \(\in\) O(1), total: O(1).

- Unsorted Array

- insert(e,k): find the location (add to end) \(\in\) O(1), insert at that spot \(\in\) O(1), total: O(1).

- removeMin(): find the location \(\in\) O(n), remove it (and shift) \(\in\) O(n), total: O(n).

- Revisit PQ Sort with sorted and unsorted arrays.

- PQ Sort + sorted array = insertion sort.

- PQ Sort + unsorted array = selection sort.

- A heap is a binary tree where:

- Every node has an element and a key.

- and every node has the lowest key of the sub-tree rooted at that node.

- i.e. a node has a higher priority than its descendants.

- The root has highest priority.

- Lets assume our heap is complete. If we do that then the tree is as bushy as possible. if it is as bushy as possible, it is as short as possible. How short? \(O(log(n))\) short.

- That's important. If the height is \(O(log(n))\), and we can do our priority queue operations in \(O(height)\), then we can do our operations in \(O(log(n))\), which is much better than \(O(n)\) using the implementations we talked about before.

- Implementing the heap:

- All our operations, removeMin(), insert(), changeKey(), and remove() need to make sure the the tree remains complete.

- If the tree is complete, what implementation should we use? Array, because it's simple to implement and won't waste space.

- lets add 7 to this heap:

- to make it complete, we want to add the node as the left child of 71.

- If we use the array implementation, this would be the first empty cell, yes?

- but now we've broken the heap property. Can we fix it? sure! Since 7 < 71, lets just swap 7 and 71.

- It's still not a heap, so lets swap 7 with 15. Still not a heap, so swap 7 with 13. Now its a heap.

- So the process is: add new node in the next available space; bubble it up to the correct depth to preserve heapiness, and we're done with insert.

- How do you know that when you bubble up a level, you won't have

to bubble it back down? If we have a parent p and 2 children: lc and

rc, where lc is what we're bubbling up, then the p-rc relationship

already obeys the heap property, so key(p) < key(rc). If we bubble

up lc, the key(lc) < key(p). Therefore key(lc) < key(rc), and we

will not need to bubble back down.

def parent(loc): return loc // 2 def insertItem(heap, item, key): # Insert at the end of the heap heap.append((key, item)) # Get the location of the newly inserted item loc = len(heap) - 1 # Bubble up the item if it is smaller than its parent while loc != 0 and heap[parent(loc)][0] > key: # Swap the item and its parent heap[loc], heap[parent(loc)] = heap[parent(loc)], heap[loc] # Move to the parent location loc = parent(loc) - Try adding 10.

- How long does this take? How many times does it go through the loop? Until we get from the leaves to the root, i.e. the height of the tree. And since the tree is complete, the height is O(log(n)).

- What about removeMin?

- To removeMin, we take out and return 7, but now we have nothing at the root- we need to fill that gap.

- The easiest way to fill the gap and remain complete, with no gaps in our array, is to fill the gap with the last element of the array (the 71).

- Now, of course, the heap property has been violated, so we need to fix it.

- Instead of "bubbling up" we need to go the other direction, and

bubble down. We swap the node with one of the children until we

reach a leaf.

def removeMin(): ret = element(root()) insertAtRoot(last()) deleteLast() loc = root while isInternal(loc) && ((key(loc) > key(left(loc))) || (key(loc)> key(right(loc)))): if not hasRight(loc) || (key(left(loc)) < key(right(loc))) s = left(loc) else: s= right(loc) swap(loc,s) loc = right(loc) return ret - How long does this take? How many times through the loop? Until we get from the root to the leaves. Since the height of the tree is \(O(log(n))\), removeMin is \(O(log(n))\)!

- So what about changeKey? If we make the key larger, we bubble down as with removeMin, taking O(log(n)). If we make the key smaller, we bubble up as with insert, taking O(log(n)).

- How does this compare to the list based implementations? It is faster because log(n) is such an improvement over n that the constant time removeMin operations don't usually make up for it.'

- THAT is extra cool.

- First we do n inserts, each taking \(O(log(n))\), for a total of \(O(n log(n))\).

- Then we do n removeMins, each taking \(O(log(n))\), for a total of \(O(n log(n))\).

- So the total time is \(2*O(n log(n))\), or just \(O(n log(n))\)

- Take a moment to reflect on that. The best sort you've ever seen in \(O(n^2)\). If you're a mortal human like the rest of us, then the best sort you've ever conceived of is \(O(n^2)\). Here's a sort that is just so fundamentally faster that all the sorts you knew before are unusable, yet it came about from trying to solve a different problem. This is the first bit of true computer science you've seen. Everything the went before this moment was just background. Everything that comes after is CS. and just think, it only took you a year to get here...

- Recall that when we first learned our sorts (bubble, insertion, selection) all the action took place in the array the numbers were initially stored in. But with heap sort, we pull them out of our original array, stuff em into a heap, and then jam em back into the array. This takes extra space. Wouldn't it be nice if we could do heap sort "in-place" so we wouldn't need extra space?

- Of course it would! Our model will be the same. We will have a

sliding bar that will demarcate the border between the heaped portion

of the array and the as yet un-heaped portion. Take the following array:

- | 13 51 15 21 88 71 54 60 55 83 29

everything to the right of the | is not yet heaped. First add 13 to the heap: - 13 | 51 15 21 88 71 54 60 55 83 29

Now add the 51 - 13 51 | 15 21 88 71 54 60 55 83 29

Now add the 15 - 13 51 15 | 21 88 71 54 60 55 83 29

Now add the 21 - 13 51 15 21 | 88 71 54 60 55 83 29

But this isn't a heap, so bubble up - 13 21 15 51 | 88 71 54 60 55 83 29

Now add the 88: - 13 21 15 51 88 | 71 54 60 55 83 29

Now add the 71: - 13 21 15 51 88 71 | 54 60 55 83 29

Now add the 54: - 13 21 15 51 88 71 54 | 60 55 83 29

Now add the 60: - 13 21 15 51 88 71 54 60 | 55 83 29

Now add the 55: - 13 21 15 51 88 71 54 60 55 | 83 29

Now add the 83: - 13 21 15 51 88 71 54 60 55 83 | 29

Not a heap, so bubble up: - 13 21 15 51 83 71 54 60 55 88 | 29

Add the 29: - 13 21 15 51 83 71 54 60 55 88 29 |

Not a heap, so bubble up: - 13 21 15 51 29 71 54 60 55 88 83 |

- | 13 51 15 21 88 71 54 60 55 83 29

- Now we repeatedly remove the min. Remember that when we remove

the min, we free up the last slot in the heap. We can put the min in

there.

- 13 21 15 51 29 71 54 60 55 88 83 |

Remove 13, swap in 83, put 13 in the sorted array: - 83 21 15 51 29 71 54 60 55 88 | 13

Not a heap, so bubble down: - 15 21 54 51 29 71 83 60 55 88 | 13

Remove 15, swap in 88: - 88 21 54 51 29 71 83 60 55 | 15 13

Not a heap, so bubble down. - 21 29 54 51 88 71 83 60 55 | 15 13

Remove 21, swap in 55: - 55 29 54 51 88 71 83 60 | 21 15 13

Not a heap, so bubble down. - 29 51 54 55 88 71 83 60 | 21 15 13

Remove 29, swap in 60: - 60 51 54 55 88 71 83 | 29 21 15 13

Not a heap, so bubble down. - 51 55 54 60 88 71 83 | 29 21 15 13

Remove 51, swap in 83: - 83 55 54 60 88 71 | 51 29 21 15 13

Not a heap, so bubble down. - 54 55 71 60 88 83 | 51 29 21 15 13

Remove 54, swap in 83: - 83 55 71 60 88 | 54 51 29 21 15 13

Not a heap, so bubble down. - 55 60 71 83 88 | 54 51 29 21 15 13

Remove 55, swap in 88: - 88 60 71 83 | 55 54 51 29 21 15 13

Not a heap, so bubble down. - 60 83 71 88 | 55 54 51 29 21 15 13

Remove 60, swap in 88: - 88 83 71 | 60 55 54 51 29 21 15 13

Not a heap, so bubble down. - 71 83 88 | 60 55 54 51 29 21 15 13

Remove 71, swap in 88: - 88 83 | 71 60 55 54 51 29 21 15 13

Not a heap, so bubble down. - 83 88 | 71 60 55 54 51 29 21 15 13

Remove 83, swap in 88: - 88 | 83 71 60 55 54 51 29 21 15 13

Remove 88: - | 88 83 71 60 55 54 51 29 21 15 13

(thanks to MIDN Zachary P. Westlake for being the first to catch the error, now corrected)

- 13 21 15 51 29 71 54 60 55 88 83 |

- Of course we have this in reverse order. How could we have done this so the numbers were in increasing order?

- As we now know, to build a heap takes O(n log(n)), because there are n inserts, each taking log(n). But it turns out that IF we have all the elements at the start, be can heapify them faster.

- Lets start w/ 15 items:

13 21 15 51 83 29 54 60 55 88 71 61 17 67 44 - We'll take the ceiling of n/2 of them (8) and make 8 tiny heaps.

13 21 15 51 83 29 54 60 - Now we take half the remaining elements (ceilinged- in this case

4), and make them roots of new heaps that merge the 8 original

heaps:

55 88 71 61 13 21 15 51 83 29 54 60 - Of course, these need fixin':

13 15 29 54 55 21 88 51 83 71 61 60 - Repeat this process with the next 2:

17 67 13 15 29 54 55 21 88 51 83 71 61 60 - and then fix:

13 29 17 15 67 54 55 21 88 51 83 71 61 60 - and finally,

44 13 29 17 15 67 54 55 21 88 51 83 71 61 60 - fix

13 15 29 17 44 67 54 55 21 88 51 83 71 61 60 - and we have our heap.

- But the real question is how long does this take?

- We started with n/2 heaps, with no fixing to do.

- Then we had n/4 heaps, each heap might need fixin and the number of fixin steps would be one.

- Then we had n/8 heaps, each heap might need fixin and the number of fixin steps might be 2.

- etc.

- this gives us:

n/2 + n/2*0 + (for the first round, make n/2 heaps, fix n/2 heaps in 0 steps)

n/4 + n/4*1 + (for the second round, make n/4 heaps, fix n/4 heaps in 1 step)

n/8 + n/8*2 + (for the third round, make n/8 heaps, fix n/8 heaps in 2 steps)

...

1 + 1*⌊log(n)⌋ (for the last round, make 1 heap, fix that 1 heap in log(n) steps. - if we re-arrange these, we get:

n/2 + n/4 + n/8 +...+ 2 + 1 + (for all the heap making)

0*n/2 + 1*n/4 + 2*n/8 + ...+ 1*⌊log(n)⌋. (for all the fixin) - That first part (the making of the heaps) adds up to O(n), doesn't it? Let n = 32. 16+8+4+2+1 = 31

- So now we have: O(n) + 0*n/2 + 1*n/4 + 2*n/8 + ... + ⌊log(n)⌋*1. (the fixin of the heaps)

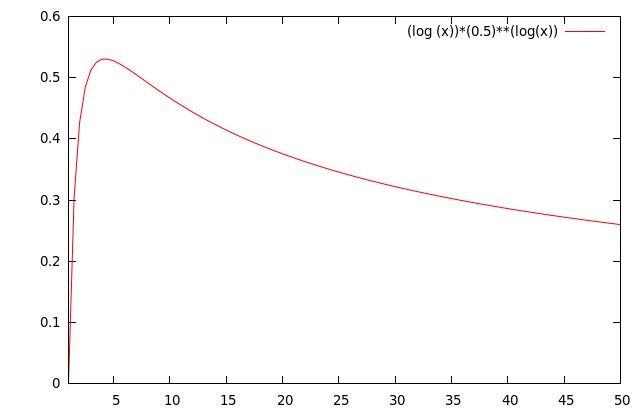

- The second part starting at 0*n/2 can be written as: (Remember that 2log(x)=x) \[\begin{align} \sum_{i=0}^{\lfloor\log n\rfloor} i\frac{n}{2^{i+1}}&= n\sum_{i=0}^{\lfloor\log n\rfloor} i\frac{1}{2^{i+1}}\\ &=n\sum_{i=0}^{\lfloor\log n\rfloor} i \Biggl(\frac{1}{2}\Biggr)^{i+1}\\ \text{Let } x=\lfloor\log n\rfloor, r= \frac{1}{2}\\ &=n\sum_{i=0}^{x}ir^{i+1}\\ \text{Let } S &= \sum_{i=0}^{x}ir^{i+1}\\ &= r^2 + 2r^3 + 3 r^4 + ... + (x-1)r^x + x r^{x+1}\\ rS&=r^3 + 2r^4 + 3 r^5 + ... + (x-1)r^{x+1} + x r^{x+2}\\ S-rS &= r^2 + r^3 + r^4 + r^5 + ... + r^{x+1} - x r^{x+2}\\ \text{Let } T &= r^2 + r^3 + r^4 + r^5 + ... + r^{x+1}\\ rT &= r^3 + r^4 + r^5 + ... + r^{x+1} + r^{x+2}\\ T-rT &= r^2 - r^{x+2}\\ T(1 - r) &= r^2 - r^{x+2}\\ T &= \frac{r^2 - r^{x+2}}{1-r}\\ S-rS &= \frac{r^2 - r^{x+2}}{1-r}- x r^{x+2}\\ S(1-r) &= \frac{r^2 - r^{x+2}}{1-r}- x r^{x+2}\\ S &= \frac{r^2 - r^{x+2}}{(1-r)^2}- \frac{x r^{x+2}}{1-r}\\ &= \frac{\frac{1}{4} - \frac{1}{2}^{\lfloor\log n\rfloor+2}}{\frac{1}{4}}- \frac{\lfloor\log n\rfloor \frac{1}{2}^{\lfloor\log n\rfloor+2}}{\frac{1}{2}}\\ \end{align} \]

- What happens to this as n grows? In the first term, as n grows

\(\frac{1}{2}^{\lfloor\log n\rfloor+2}\) goes to 0, making the

whole fraction approach 1.

In the second term, \(\lfloor\log n\rfloor \frac{1}{2}^{\lfloor\log n\rfloor+2}\) (the numerator) will approach 0 in the limit:

Thus S approaches 1 as n grows, \(O(1)\). - Remember that this was just the summation part, we still had an \(n\) we factored out, so the fixing portion takes overall \(O(n)\).

- Therefore building a heap bottom up takes \(O(n)\).